DoP Deep: visionOS and product opportunities in spatial computing

A deeper look at spatial computing and building a strategy for the future


In last week’s briefing, we led with the news of Apple’s first major product release in almost a decade: the Vision Pro.

As I mentioned at the time, there are still fundamental UX questions that need addressing:

What if I’m wearing makeup?

What if I have long hair?

Will I feel sick after using the headset for a longer time?

And most importantly, do people really want to strap a computer to their face?

Let’s set some of those concerns aside for a moment and assume that Vision Pro sells enough units to become a legitimate platform in its own right. What opportunities do Apple’s new operating system, visionOS, and spatial computing more broadly open up for product teams?

First, let’s take a look at the design philosophy underpinning the new spatial operating system Apple is shipping with the device.

The design principles of visionOS

As is pretty standard for major new hardware launches at WWDC, Apple released a bunch of accompanying videos to help developers understand the design principles and ethos behind the product.

For Vision Pro, this means a brand new operating system, visionOS, and a set of design guidelines to match.

They are:

Familiar

Human-centered

Dimensional

Immersive

Authentic

Apple is doing its best to position its new device as a headset that seeks to respect your environment, rather than replace it.

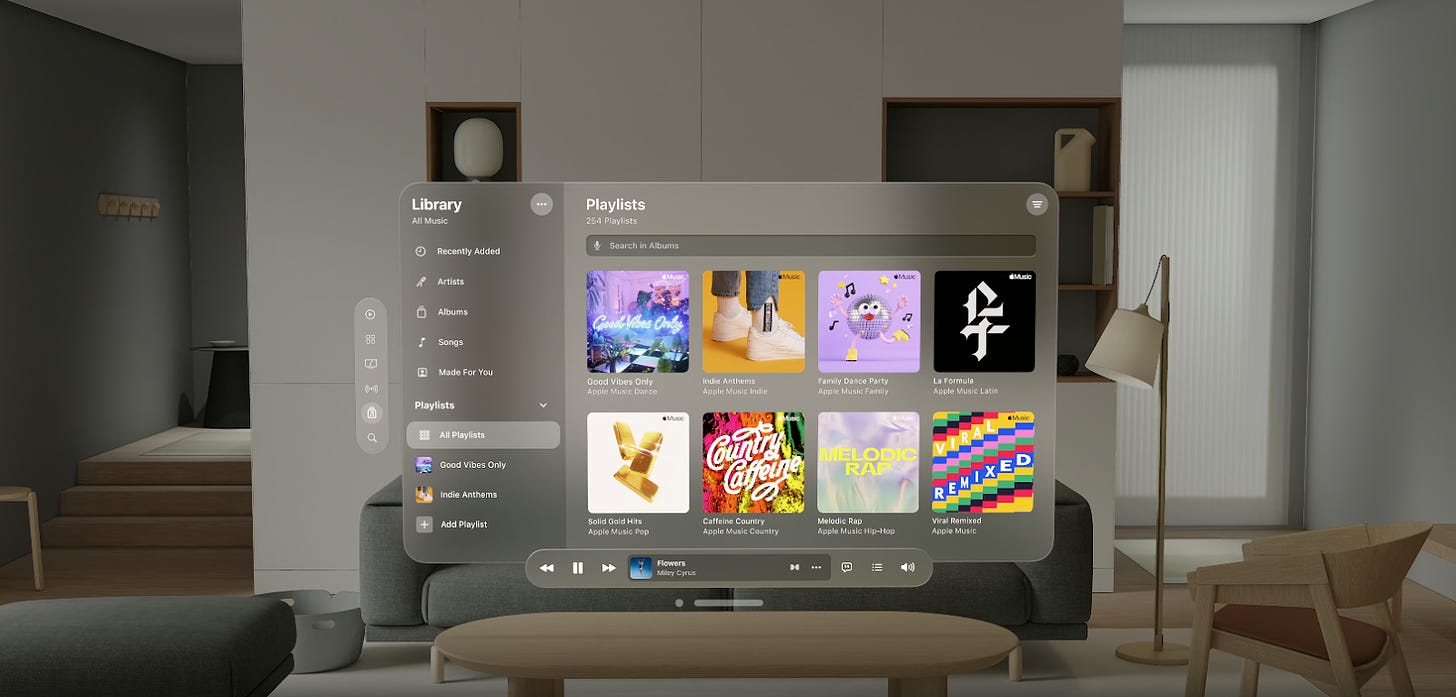

Take familiarity as an example. Apple starts with a well-known concept, like the window interface for its Music app, and turns it into a matte-finished, translucent glass panel that is still recognizable but adapted to spatial computing.

This suggests that product teams will need to rethink their existing design systems, UI libraries and interaction models and repurpose them for a spatial computing context.

New gestures

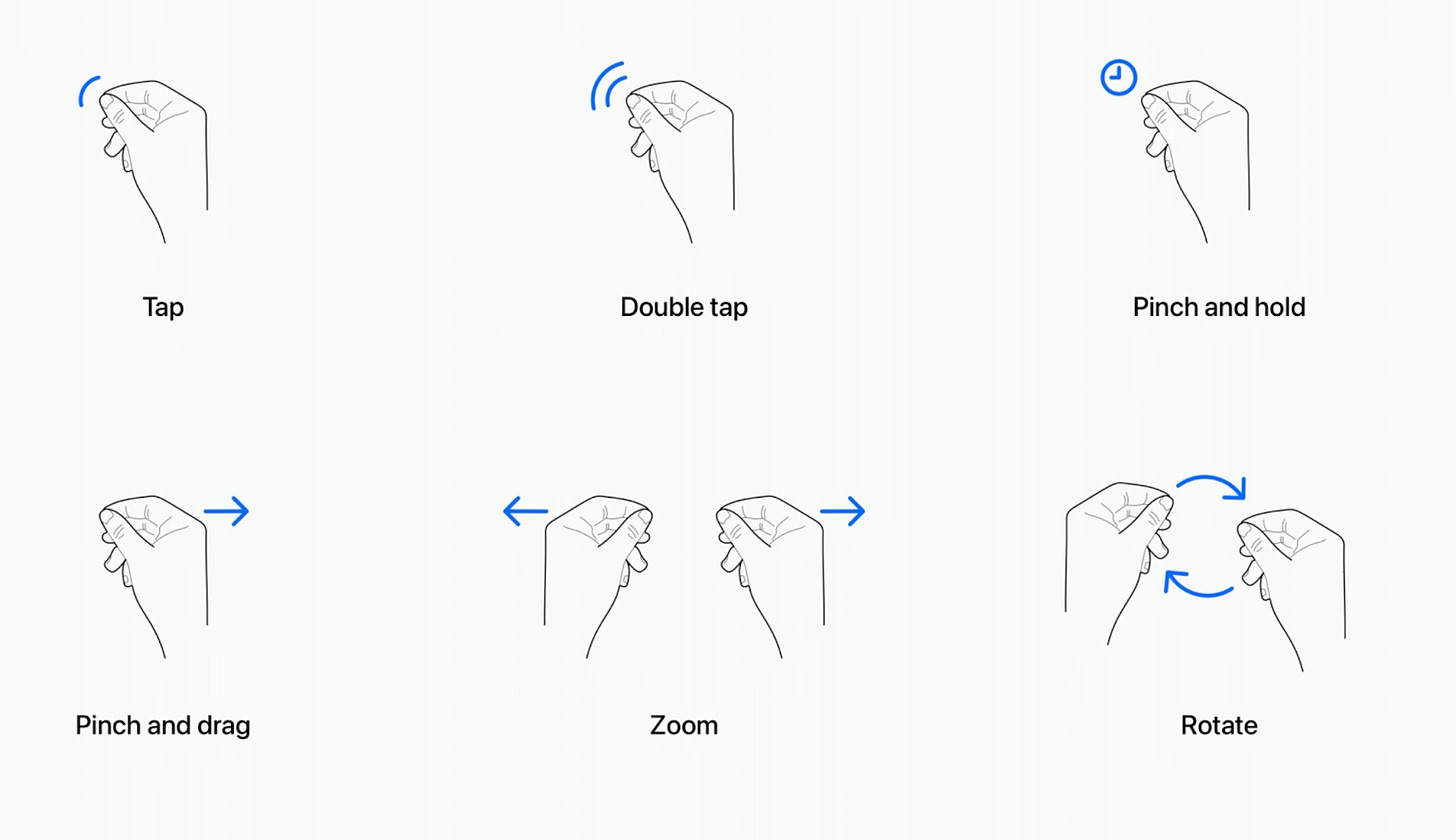

Alongside new design principles, visionOS also introduces a set of brand new gestures. These include tap, double tap, pinch and hold, pinch and drag, zoom, and rotate.

For a full deep dive into some of the most important abilities in visionOS check out this thread.

These gestures and design principles help shape the potential solutions product teams might build in visionOS. But it remains to be seen just how much these new gestures will get used, of course.

Apple Vision Pro supports traditional inputs like mice and keyboards. I have a gut feeling that, just as with the Wii and the other motion-based games consoles that came after it (Xbox Kinect, PlayStation Move), once the novelty factor wears off we’ll probably see a reversion to traditional input devices.

We’re yet to see just how well visionOS supports those input types, so for now we’ll assume that users will use a mix of the two.

Given these principles and gestures, what spatial use cases actually exist?

Where spatial computing could fit into a person’s daily life

It’s been noted that a lot of the visuals in Apple’s keynote focused on productivity. In stark contrast to Meta’s Quest marketing materials, they were also often pretty isolating: a single user sat alone with the headset strapped to their face, getting on with some work or watching a movie.