Perplexity takes on Claude Code and Anthropic reveals how its own teams use it internally

Plus: The AI Memo that everyone is talking about, How to use Google Opal's new features, Why millennials write the best prompts

Hi product people 👋,

This week, a single fictional AI memo sent markets into yet another frenzy about the potential impact of AI on the economy, with companies like DoorDash, Uber and Mastercard in the spotlight. We’ll take a look at what the memo actually says, what it gets wrong, and what it means if you’re building products today.

Plus, an exciting new product from Perplexity sees it take on Claude Code, we look at how Anthropic’s product teams actually use Claude internally, and a new study reveals the design aesthetics of modern AI companies, with some inspiration that you can use for your own products.

Have a great weekend!

Rich


Key reads and resources for product teams

New from the Department of Product Substack this week:

Knowledge Series - How to use Cursor for non-engineering use cases

This new guide shows non-engineers (PMs, designers, operators) how to turn Cursor into a practical AI workbench instead of “just a dev tool”. You’ll learn how to visually understand any codebase in minutes, spin up a native browser to test new features, wire up a product analytics pipeline that auto-flags anomalies and opens tickets, and use Claude Code with Figma’s MCP to capture real UIs as fully editable designs. If you’ve been hearing about Cursor and Claude but aren’t sure how they translate into your actual day-to-day work, this latest Knowledge Series guide should help.

New prompt in the library - Build a presentation with Micro-Animations using Google’s new Gemini 3.1 Pro

Use a single Gemini 3.1 Pro prompt to turn a static technical architecture diagram into an animated SVG that explains itself. It sequences each component in logical order, with data-flow pulses running along the arrows to show the system live - ideal for when you need to explain concepts to non-technical audiences or just bring them to life. (Department of Product)

Case studies - How Anthropic’s product teams actually use Claude

Anthropic hosted a live event in New York this week. In this video from the event, four Anthropic functional leaders from Finance, Legal, Sales, and Product show how their teams use Cowork and plugins in their daily work with demos that bring each use case to life. (Anthropic)

Opinion - Why vibe coding is the new product management

Product management has taken over coding. Vibe coding is the new product management. Instead of trying to manage a product or a bunch of engineers by telling them what to do, you’re now telling a computer what to do. And the computer is tireless. The computer is egoless, and it’ll just keep working. It’ll take feedback without getting offended. Investor and AngelList co-founder Naval Ravikant makes the case. (Naval on X)

Process - Cursor’s CEO on the “Third Era of AI Software Development”

We’re shifting from “AI that helps you type code” to “AI that runs your software factory” where fleets of autonomous, cloud-based agents quietly build, test, and refine entire features in the background while humans focus on defining problems and reviewing outcomes.

Design - The Aesthetics of AI companies

This new report called “The Aesthetics of AI” has dissected the visual design of 23 AI companies, from OpenAI and Anthropic to Perplexity and Mistral. It uncovers how brands make AI feel approachable (off-white palettes and organic gradients) while standing out via 14 distinct styles, including Digital Impressionism’s moody blurs, Lomo’s analog grit, Quirky Cuteness mascots, ASCII pixel nostalgia, and more.

Payments - Stripe’s annual letter and the future of Agentic Payments

Stripe's annual letter explores several emerging payment themes including the rise of agentic payments. (Stripe)

Visions of the future - The 2028 Global Intelligence Crisis

This piece went viral this week. The “2028 Global Intelligence Crisis” is a fictional memo by Citrini Research imagining how AI reshapes the economy by 2028. Its core argument: vast amounts of enterprise value were built on human limitations such as laziness, impatience, and defaulting to familiar options. AI agents eliminate those limitations. For tech products, the implications are stark. SaaS tools built on workflow convenience (Asana, Zapier) face internal competition as building in-house becomes viable. Consumer apps like DoorDash lose their home-screen loyalty advantage when agents price-shop instantly. (Substack)

New product features and innovation this week

Perplexity has revealed a new product that it has been quietly working on over the past few months.

Perplexity Computer is a cloud-based “digital worker” that goes beyond answering questions to taking multi-step actions directly in the browser. From a single prompt, it can chain multiple capabilities together - moving you from an initial question all the way through to a finished output and follow-up actions in one shot.

Early examples shared after the launch include a fully functional Bloomberg-style terminal with real-time financial data and a web app tracking over 1,500 satellites in real time. Perplexity’s own designer even used it to help build the Computer landing page itself.

It’s positioned as a direct response to Claude Code’s dominance in the AI coding space, and it sits in the same category of tools that are blurring the line between asking and doing. As part of the release, Perplexity has published a gallery of things you can do with it, including an example that runs a competitor analysis of social media apps and turns the output into a data visualization chart and an Excel spreadsheet.

DoorDash and Google explore agentic task automation

DoorDash took a step towards a future where food ordering is handled by AI agents this week, with the release of a new beta feature in Gemini. The feature works on new Android phones: long-press the power button, tell Gemini what you want to eat, and it will handle the multi-step ordering flow in apps such as DoorDash and Uber Eats.

DoorDash’s CTO takes the threat - and opportunity - of agentic commerce seriously, posting on X:

The onus will be on us to create a compelling ecosystem for agents to participate in. Not only that, we’re going to need to ensure our merchant partners can do the same for their own customers. That’s why we’re so focused on the agentic commerce tools we build for ourselves and our merchant partners. The ground is shifting underneath our feet, and the industry is going to need to adapt to it.

Google Opal gets new agentic workflows

Google just shipped a pretty big Opal update that turns it from a simple vibe coding mini‑app builder into something much closer to an agentic workflow platform with memory and dynamic routing.

You can now describe a task in text and let an Opal agent plan and execute a multi-step workflow, rather than following a fixed flow you designed. It uses Gemini 3 Flash, automatically picks tools (e.g., Sheets, web search) and decides the next step as it goes. Instead of scripting every branch, you describe goals and criteria, which is ideal for messy product tasks like “research this market and suggest next moves”.
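To make the difference between a fixed flow and an agent-chosen path concrete, here is a minimal Python sketch. It is not Opal's API: the tool functions, the `choose_next_tool` rule and the `run_agent` loop are all hypothetical stand-ins, and in a real agent a model would make the choice that the simple rule makes here.

```python
# Toy illustration only: hypothetical tools and a rule-based "planner".
# In a real agentic workflow, a model would decide the next step.

def web_search(state):
    # Pretend to research the goal on the web.
    state["findings"] = f"notes on {state['goal']}"
    return state

def read_sheet(state):
    # Pretend to pull a metric from a spreadsheet.
    state["metrics"] = {"signups": 120}
    return state

def write_brief(state):
    # Combine what has been gathered into a short brief.
    state["brief"] = f"{state['findings']}; signups={state['metrics']['signups']}"
    return state

TOOLS = {"web_search": web_search, "read_sheet": read_sheet, "write_brief": write_brief}

def choose_next_tool(state):
    """Pick the next tool based on what is still missing (assumed rule)."""
    if "findings" not in state:
        return "web_search"
    if "metrics" not in state:
        return "read_sheet"
    if "brief" not in state:
        return "write_brief"
    return None  # goal reached, nothing left to do

def run_agent(goal, known=None, max_steps=10):
    """Loop: choose a tool, run it, repeat until done or out of steps."""
    state = {"goal": goal, **(known or {})}
    trace = []
    for _ in range(max_steps):
        tool = choose_next_tool(state)
        if tool is None:
            break
        state = TOOLS[tool](state)
        trace.append(tool)
    return state, trace
```

Calling `run_agent("the meal-kit market")` runs all three tools in order, while passing `known={"metrics": {"signups": 5}}` skips the spreadsheet step because that information is already available. The path depends on the state rather than on a flow drawn in advance.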

🧠 Ideas on how you can use Google Opal for product work

If you haven’t used Opal before, it’s a product from Google Labs. Here are some experimental ideas for product teams on the kinds of things you can build with it:

Competitive and market snapshots: Build an Opal that takes a product/URL, searches the web, and outputs pros/cons, positioning, and pricing in a structured brief for PMs and execs

Customer / prospect briefing bots: Before a meeting, an Opal agent can fetch company background, recent news, and internal notes, then tailor a one‑pager based on whether it’s a new or existing customer

Async status and updates: Workflows that collect updates from Jira/Asana/Sheets, summarize them by squad/theme, and auto‑draft status reports for stakeholders

Churn signals watcher: An always‑on Opal agent that ingests product usage and billing data, scores churn risk per account, and maintains a live, prioritised risk list with natural‑language reasons and trends.
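As a rough illustration of the churn watcher idea, the scoring step could look something like the Python sketch below. Everything in it is an assumption made for illustration: the `Account` fields, the weights (up to 60 points for a drop in logins, 20 per failed payment, capped at two) and the function names are hypothetical, and nothing here is part of Opal itself.

```python
from dataclasses import dataclass

@dataclass
class Account:
    # Hypothetical fields; real usage and billing data will differ.
    name: str
    logins_last_30d: int
    logins_prev_30d: int
    failed_payments: int

def churn_risk(account):
    """Return (score, reasons) using simple, assumed weights."""
    score = 0
    reasons = []
    if account.logins_prev_30d > 0:
        # Fractional drop in logins versus the previous 30 days.
        drop = 1 - account.logins_last_30d / account.logins_prev_30d
        if drop > 0:
            score += round(min(drop, 1.0) * 60)
            reasons.append(f"logins down {drop:.0%} month over month")
    elif account.logins_last_30d == 0:
        score += 60
        reasons.append("no logins in the last 60 days")
    if account.failed_payments > 0:
        score += min(account.failed_payments, 2) * 20
        reasons.append(f"{account.failed_payments} failed payment(s)")
    return score, reasons

def risk_list(accounts):
    """Score every account and sort with the highest risk first."""
    scored = [(a.name, *churn_risk(a)) for a in accounts]
    return sorted(scored, key=lambda row: row[1], reverse=True)
```

For example, `risk_list([Account("Globex", 30, 28, 0), Account("Acme", 5, 20, 1)])` puts Acme first, with a score and plain-language reasons attached. That is the kind of prioritised list the agent would keep up to date.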

Cursor’s new “Demo” for evaluating new features with video

Cursor has released a new feature that allows AI agents to record their work and share a video for verification. The “demo” feature is basically the agent screen-recording its own work inside a cloud virtual machine and packaging that together with other artifacts (screenshots, logs) so you can review what it did without reproducing everything locally.

For product teams, this means designers and PMs who might not be comfortable running the app locally can still review changes. They just watch the demo and leave comments, which helps speed up sign-off on new features.

📈 Product data and trends to stay informed

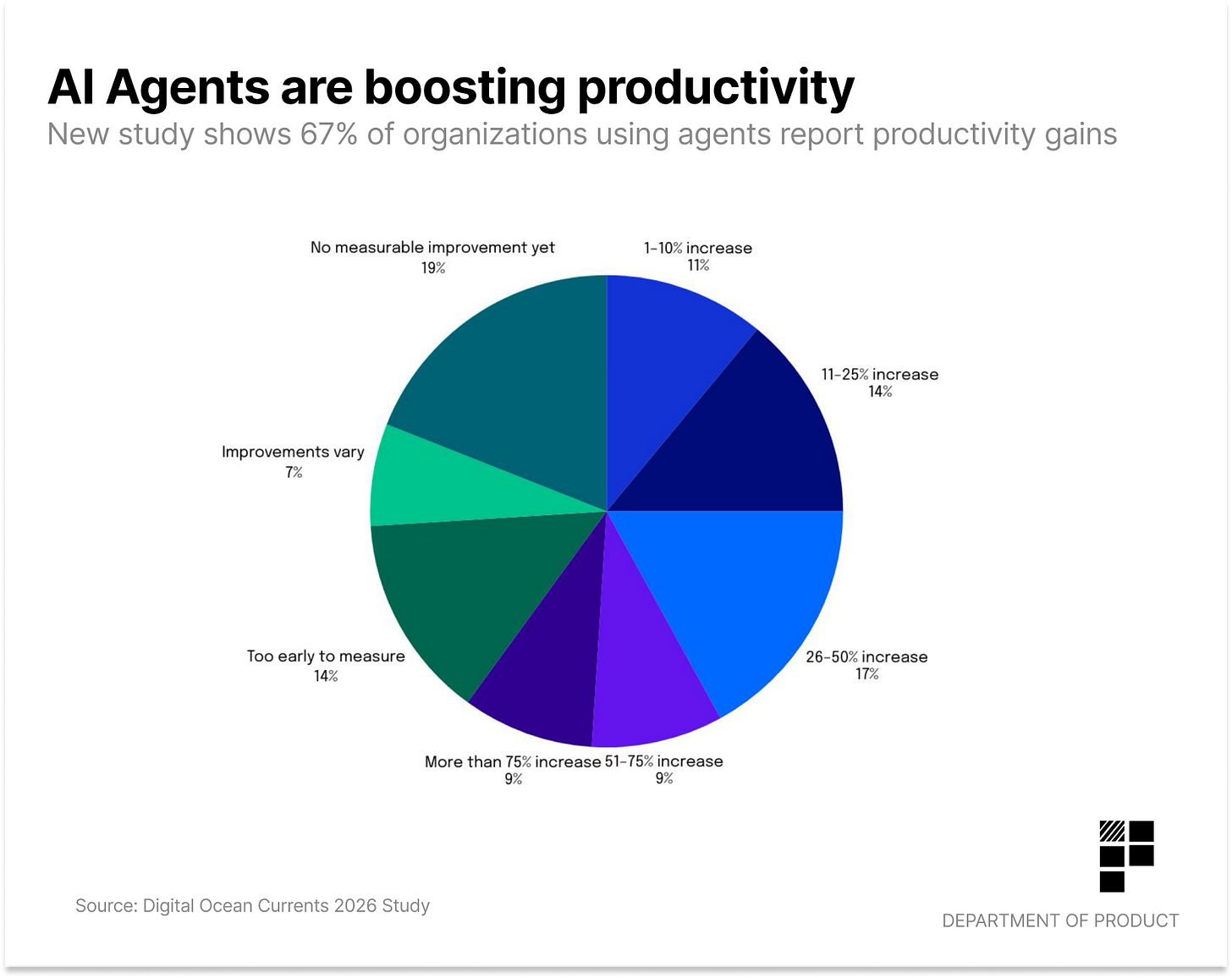

A new survey of over 1,100 developers and CTOs found that 60% of organizations using agents report at least some productivity gains, with code generation, automating internal operations, and customer support reported as the top three use cases.

Anthropic published a new report called The Code Modernization Playbook, and IBM’s stock dropped 13% as a result. The playbook walks through detailed, code-level examples of “agentic” modernization, including migrating from monolithic on-prem systems to cloud-native microservices, replacing legacy stacks like COBOL, and shifting from waterfall and manual releases to CI/CD, automated testing, Infrastructure as Code and GitOps.

Some stats from the report which might be of interest to product teams:

Developers spend 17.3 hours per week on technical debt, bad code, and maintenance instead of building.

Nearly 70% of organizations cite technical debt as a primary inhibitor of innovation.

Companies that embrace digital transformation (including code modernization) have 14% higher market value than those that don’t.

Over half of U.S. teens use AI chatbots for core tasks. 57% have used them to search for information, 54% to get help with schoolwork, and 47% for fun or entertainment. This new Pew Research Study is worth a read to keep on top of how young people are interacting with AI.

Millennials are the best AI prompters, according to a new report by Adobe. Millennials scored best on the objective prompt test with an average of 58/100, compared with Gen Z’s 56/100. The study also found that men are 80% more likely than women to believe that ALL CAPS “shouting” improves AI output.

Traffic to generative AI products drops significantly on weekends. ChatGPT sees the steepest decline at -16.8%, while Grok sees the smallest at -7.9%.

Uber’s CEO says 90% of its engineers now use AI in their work, while about 30% are “power users” of AI tools who are completely rethinking the architecture of the company. Some of Uber’s engineers even built an AI clone of the CEO to prep for upcoming meetings.


The Anthropic teams using Claude Code piece is the most interesting part. What I'd love to know: how detailed are their prompts?

I've been building with Claude Code daily for months. The quality gap between vague requests and specific briefs is enormous. Like, boring vs. interesting enormous.

Ran an actual experiment on this - 30 days, building apps every day, varying direction levels. The lesson was embarrassingly simple but easy to miss.

Full write-up: https://thoughts.jock.pl/p/directed-ai-experiments-vibe-business

Are you seeing similar patterns in how top teams brief their AI tools?